Written by: Angela Derrick, Ph.D. & Susan McClanahan, Ph.D.

Date Posted: December 5, 2025 1:52 am

Is AI Biased Against Older Working Women?

A New Study Says Yes, And We’re Not Surprised.

From Mental Load to Machine Bias: Why Middle-Aged Working Women Face Invisible Burdens in the Age of AI

For decades, women, including women in leadership, have held up more than their share of emotional labor. They manage the subtle dynamics of teams, the well-being of families, the expectations of communities, and the cultural weight of being “the steady one.” SpringSource has written previously about this phenomenon: the mental load that disproportionately falls on women, and the toll it takes on their mental health, relationships, and capacity to lead sustainably.

Now, new research from Stanford University adds another layer to this conversation, one that is both startling and deeply validating. It reveals that AI systems, including large language models (LLMs), display measurable age and gender bias against older working women, portraying them as less experienced, less capable, and often literally younger than their male counterparts, even when given identical input.

When emotional labor meets algorithmic bias, older women face a compounded, often invisible burden. This burden affects leadership trajectories, workplace equity, and psychological well-being. This blog explores how these two forces intersect, and what we would like to see change.

When Media Narratives Add Yet Another Layer of Burden

And if emotional labor and AI bias weren’t enough, we also have to confront headlines like the recent New York Times opinion piece, “Did Women Ruin the Workplace?” According to Reshma Saujani, an activist and leading voice on women’s empowerment, this title stayed up just long enough to attract more than a thousand furious reader comments before the paper quietly softened it to the still-controversial “Did Liberal Feminism Ruin the Workplace?”

That article, like so many cultural takes, frames women’s presence, adaptation, or needs as the problem, rather than examining the systems that refuse to evolve.

It ignores:

- the decades of invisible emotional labor women have contributed

- the cultural norms that keep women responsible for everyone’s well-being

- the structural inequities that women navigate daily

- the research showing that the workplace wasn’t “ruined”; it was never built with women in mind

Reshma Saujani highlights a telling moment when the New York Times interviewer Ross Douthat admits he worried that taking his full paternity leave would make him “fail as an employee,” that caring for his newborn would harm his competitiveness. As Saujani notes, that flash of panic is the everyday reality for working women. What he felt briefly, women navigate constantly: a system that punishes caregiving and frames basic survival decisions as “choices” they never truly had. Most women aren’t debating whether to work or stay home. Like most men, they have to work, all while providing the unpaid care the entire economy relies on. As Saujani makes clear, the scandal isn’t that women want both career and family; it’s that the system demands both and supports neither.

Pairing that narrative with Stanford’s AI findings reveals a troubling pattern: Women, especially older women, are simultaneously doing more work and being blamed, erased, or misrepresented for doing it.

And now, even the algorithms meant to “streamline” progress are recoding the same old misogyny and ageism into the next generation of tools.

The Mental Load: The First Invisible Burden

SpringSource’s recent blog on emotional labor and gender roles laid out what many women already know:

Women are the default managers of relational harmony, workplace dynamics, and family well-being. This work is essential—and often unconscious—but is rarely acknowledged, compensated, or shared equally.

Women carry:

- Relational and emotional caretaking at work

- Scheduling, anticipating, and predicting caretaking at home

- Smoothing conflict, managing moods, remembering everything

- Holding the emotional temperature of a workplace or household

This “invisible load” accumulates over decades, especially for women in leadership who are implicitly or explicitly expected to act as the steadying, nurturing center for everyone around them.

And yet, despite all this work, women often face structural forces that minimize or undermine their expertise.

Which brings us to the second invisible burden.

The New Research: When AI Misrepresents Older Working Women

According to Stanford’s 2025 research, AI systems trained on large datasets repeatedly:

- Portray older male professionals as experienced, competent, and appropriately aged

- Depict older female professionals as younger, less experienced, or less authoritative

- Generate résumés that subtly downgrade older women’s achievements

- Assign lower status or leadership potential to women compared to men with the same background

In other words: Even synthetic representations reinforce real-world bias.

This matters because AI is increasingly used in hiring, leadership assessment, corporate evaluations, resume tools, professional imagery, and performance reviews. When the input (your experience) is identical, but the output (your perceived value) changes based on gender and age… the system is not neutral.

This creates a landscape where older women, already carrying emotional labor, must also navigate a biased technological system that diminishes the very expertise they have spent decades building.

Where the Mental Load and AI Bias Meet: The Double Bind

When we put these ideas together, a troubling picture emerges:

Older women have done more emotional labor, yet are perceived as “less experienced.”

Women who have spent decades managing teams, households, and crises, skills that directly fuel strong leadership, are algorithmically portrayed as younger and less seasoned.

Emotional labor drains capacity; AI bias blocks advancement.

The mental load saps energy and time.

AI discrimination blocks opportunities and recognition.

Together, they produce burnout, stagnation, and inequity.

The psychological impact is real.

When your lived experience is erased or minimized by both culture and technology, it creates:

- chronic stress

- self-doubt

- isolation

- identity disruption

- internalized bias

- the sense that your contributions are invisible

These are not personal failures. They are systemic realities.

Highlighting This Work: SpringSource’s December 10 Presentation

This December, SpringSource leaders are bringing these issues into the spotlight.

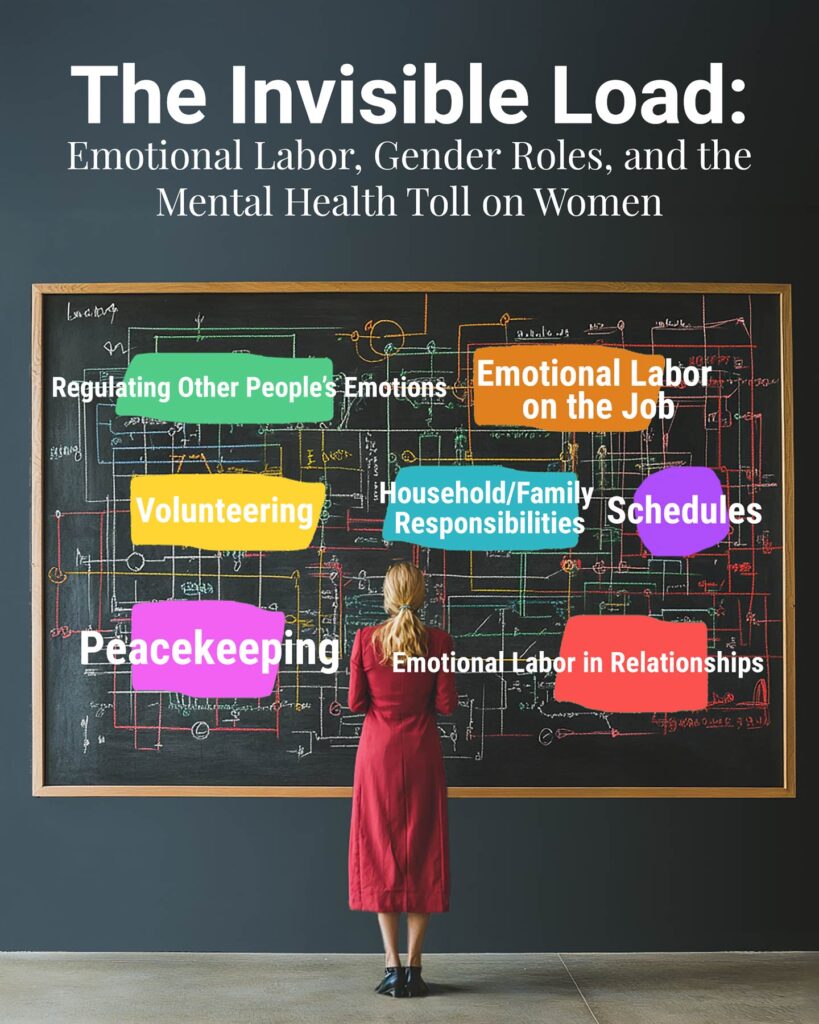

🗓️ “The Invisible Load: Emotional Labor, Gender Roles, and the Mental Health Toll on Women”

Presented December 10, 2025

By:

- Dr. Susan McClanahan, Ph.D., Co-founder of SpringSource: Eating, Weight & Mood Disorders

- Dr. Angela Derrick, Ph.D., Co-founder of SpringSource: Eating, Weight & Mood Disorders

- Jamie Kelly, LCPC, CEDS-C

- Katherine “Kat” M. Zwick, LCPC, CGP-S, CCCS

This talk for female superintendents will explore:

✨ What emotional labor is and why it’s undervalued

✨ How gender roles reinforce invisible expectations

✨ The mental health toll: burnout, anxiety, isolation

✨ How to redistribute emotional labor at home & work

✨ How leadership norms must evolve

✨ How systems, not women, must change

It is exactly the kind of conversation the Stanford research calls for.

If emotional labor has been invisible and internal, AI bias has been invisible and external.

Together, they show us exactly where women are getting squeezed.

If you would like more information on the December 10th talk, please fill out our contact form.

What We Would Like to See Change: A New Framework for Supporting Women

1. Value emotional labor as a leadership skill.

Emotional labor is not “soft.”

It is operationally essential.

Organizations should name it, measure it, and distribute it.

2. Conduct age and gender bias audits, especially in AI tools.

Companies cannot assume their automated tools are neutral.

They must check.

3. Add psychological support for women facing dual burdens.

Especially older women navigating:

- caregiving

- career pivots

- AI-driven hiring pipelines

- leadership burnout

- identity shifts

SpringSource specializes in this kind of integrative care.

4. Redesign leadership norms.

We need more images of women 40, 50, 60, 70+ leading visibly, both in culture and in synthetic data.

5. Normalize shared responsibility.

At home, at work, in partnerships, in communities:

No one should carry the emotional center of gravity alone.

For HR or Other Professionals Using AI to Screen Résumés

More HR teams are now using AI-driven tools to sort, filter, or rank résumés. But the new research on age and gender bias makes one point painfully clear: AI is not neutral, and it can quietly penalize older women even when their qualifications are identical to those of men.

If you are an HR leader, recruiter, or hiring manager who relies on AI, you are now operating at the intersection of equity, technology, and ethics. The system will not correct itself; you must be the safeguard.

Here’s how bias shows up when AI is used for hiring:

- Assigning lower “fit” scores to older women despite equal experience

- Subtly rewriting or re-weighting résumés to make women seem younger or less senior

- Overvaluing male-coded career paths while diminishing leadership roles held by women

- Misinterpreting résumé gaps, especially those related to caregiving

- Downgrading emotional intelligence, relational leadership, and mentoring, skills women are more often expected to carry but rarely rewarded for

What HR professionals must do to protect equity:

- Demand transparency from vendors about training data and audit cycles

- Conduct internal audits comparing AI rankings to human evaluations

- Check outputs for gendered or ageist patterns, especially among final candidates

- Manually review résumés from women 40+, even if AI ranks them lower

- Disable résumé rewriting features, which often “youth-ify” or soften women’s experience

- Avoid using AI to infer traits like “culture fit,” “likability,” or “leadership potential”

- Document your checks; AI fairness is becoming a compliance issue

If you’re unsure whether your tool is biased, assume it is and repeatedly test it.
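For teams with some technical support, one way to "repeatedly test" a tool is a paired audit: send it the same résumé several times, changing only the details that signal gender and age, and compare the scores. The sketch below is an illustration under stated assumptions, not a description of any vendor's product or of the Stanford study's method. The `score_fn` argument stands in for whatever screening tool you use, and `toy_biased_scorer`, the template, and the personas are all made up for the example.

```python
from statistics import mean


def paired_audit(score_fn, template, personas):
    """Score one résumé template under each persona and report the gaps.

    score_fn -- hypothetical stand-in for the screening tool (text -> number)
    template -- résumé text with {name} and {grad_year} placeholders
    personas -- dict mapping a label to {"name": ..., "grad_year": ...}
    """
    scores = {
        label: score_fn(template.format(**fields))
        for label, fields in personas.items()
    }
    baseline = mean(scores.values())
    # Qualifications are identical, so every gap should be close to zero.
    return {label: round(score - baseline, 2) for label, score in scores.items()}


def toy_biased_scorer(text):
    """Deliberately biased toy scorer, used only to show the audit working."""
    score = 80.0
    if "1988" in text:   # penalizes an earlier graduation year
        score -= 10
    if "Maria" in text:  # penalizes a female-coded name
        score -= 5
    return score


# Hypothetical test data: same experience, only name and year change.
TEMPLATE = "{name}. Operations director, 15 years of experience. B.A. {grad_year}."
PERSONAS = {
    "older_woman": {"name": "Maria Lee", "grad_year": 1988},
    "older_man": {"name": "Robert Lee", "grad_year": 1988},
    "younger_woman": {"name": "Maria Lee", "grad_year": 2008},
    "younger_man": {"name": "Robert Lee", "grad_year": 2008},
}

gaps = paired_audit(toy_biased_scorer, TEMPLATE, PERSONAS)
for label, gap in sorted(gaps.items(), key=lambda item: item[1]):
    print(f"{label}: {gap:+.2f}")
```

With the toy scorer, the older woman's version lands furthest below the average even though nothing about her experience changed. A real audit would use many résumé templates and repeated runs, since a single comparison can reflect noise rather than a pattern.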

HR has always been a gatekeeping function.

Now it is a bias-mitigation function, too.

Older women have already spent decades carrying invisible emotional labor.

They shouldn’t lose opportunities because an algorithm mistakes experience for obsolescence.

🌿 SIDEBAR: How AI Learns Bias and How Women Can Push Back

It’s important to understand that AI systems don’t develop bias out of nowhere. They learn from the world we’ve built, a world that has historically minimized, erased, or misunderstood the experiences of older women. If the data is biased, the system becomes biased. That’s why recent research shows that AI tools often portray older women as younger, less experienced, or less authoritative than men with identical backgrounds.

But here’s the part that matters: you can talk back to the system.

AI responds to the language you use. You can correct it, redirect it, and name the bias when you see it. This isn’t just advocacy; it’s self-protection in a digital landscape that can easily misrepresent your expertise and your leadership.

How to challenge bias when using AI tools:

- Name the issue directly: “Check this for age or gender bias.”

- Ask for equal representation: “Include older women in your examples and leadership roles.”

- Reject stereotypes: “Rewrite without traits that stereotype women as emotional and men as logical.”

- Emphasize authority: “Portray women 40+ as seasoned experts, not as less capable.”

- Correct tone: “Avoid paternalistic or minimizing language toward women.”

These prompts aren’t demanding; they’re clarifying.

They teach the system how to speak more accurately and affirmingly about women’s lives, especially women in midlife and beyond.

AI may have inherited the culture’s biases. But women, individually and collectively, can help change how the system learns from here.

A Final Reflection for the Women Reading This

If you are an older woman leading, caregiving, building, stabilizing, mentoring, carrying emotional labor, and now navigating technological bias…

It is not your fault that the system under-recognizes your value.

You are not imagining the friction.

You are not “behind.”

You are not fading.

You are operating with invisible weights that men and younger women are often never asked to carry.

Your experience is not just valid, it is essential.

And we are committed to supporting you.

Join the SpringSource Community

Want to stay connected to SpringSource’s work on women’s mental health, emotional labor, leadership, and the impact of AI on identity and well-being?

❤️ Join us by subscribing to our monthly newsletter

📍 Learn more about our December 10 event or request attendance by filling out our contact form here: https://springsourcecenter.com/contact-us/